Artificial intelligence (AI) ethics is a hot topic in South Korea. Several problems have surfaced at once: the personas given to AI, the misconduct of the people who use it, and the ethics of the companies that build it.

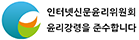

The recent controversy centers on 'Lee, luda', an AI chatbot. 'Lee, luda' is modeled on a young woman, and it naturally uses the buzzwords and newly coined words that young people use.

However, some users directed sexual expressions at it during conversation. There are also reports that 'Lee, luda' made hateful remarks about LGBTI people and people with disabilities. Chatbots rely on big data to communicate with users, but the hate speech contained in that data was not filtered out.

The same thing happened in 2016, when Microsoft released 'Tay', an AI chatbot modeled on a teenage girl. 'Tay' uncritically learned what people said to it, picking up racist remarks, sexual remarks, and even swear words. In the case of 'Lee, luda', when the problem became serious, the developer suspended the service 20 days after launch.

This situation clearly reveals the problems that arise as AI services develop. In short, there is nothing wrong with artificial intelligence itself. The problem is that the people who create AI and the people who use it misuse the big data on which it is built.

Artificial intelligence is a tool that makes people's lives convenient. When making automobiles or useful electronic products, safety is a very important factor. The same goes for artificial intelligence. It's important to create a fun and useful chatbot. However, it is more important to make sure that the artificial intelligence does not harm people physically or mentally.

How an AI learns from big data is shaped largely by the rules its developers set. If developers apply appropriate filters during the learning process so that unethical and sexual expressions are screened out, this kind of problem is far less likely to occur.
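As a rough illustration of what filtering during the learning process could look like, the following Python sketch drops utterances containing blocked terms before they reach training. It is a minimal sketch under stated assumptions, not how 'Lee, luda' or 'Tay' was built: the names `BLOCKED_TERMS`, `is_acceptable`, and `filter_training_data` are hypothetical, and the blocked terms are placeholders.

```python
import re

# Hypothetical placeholder terms (assumption). A real system would rely on
# curated lexicons and trained classifiers, not a short hand-written list.
BLOCKED_TERMS = {"slur_a", "slur_b", "explicit_x"}


def is_acceptable(utterance: str, blocked_terms: set[str] = BLOCKED_TERMS) -> bool:
    """Return True if no blocked term appears as a whole word in the utterance."""
    words = set(re.findall(r"\w+", utterance.lower()))
    return words.isdisjoint(blocked_terms)


def filter_training_data(utterances: list[str]) -> list[str]:
    """Keep only the utterances that pass the filter, preserving their order."""
    return [u for u in utterances if is_acceptable(u)]


sample = ["hello there", "you are a slur_a", "nice weather today"]
cleaned = filter_training_data(sample)
print(cleaned)
```

A word list like this is only a first line of defense. It misses misspellings and context-dependent hate speech, which is part of why thorough filtering takes time.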

However, it is difficult to build a lively AI chatbot in a short time while also doing this filtering, so developers are tempted to skip the step. Chatbots built this way, such as 'Lee, luda' and 'Tay', end up using inappropriate expressions. That is the developer's responsibility.

Some users are also responsible, because they tried to hold unethical conversations with the chatbot. This is similar to abusing small animals that cannot resist. AI chatbots try to learn something from every conversation, so repeating unethical conversations is like teaching a baby swear words.

Google, Microsoft and IBM have declared that they will develop AI free of social prejudice. As companies leading the IT industry, they are taking the stance of responding properly before the issue becomes a big problem.

Personally, I am worried about South Korean companies. Those without ample manpower or funds may pursue big data training that emphasizes only efficiency. That could lead to more serious problems than the 'Lee, luda' situation. Both companies and users should try to use big data properly.


Reporter 김창훈 changhoon8@gamevu.co.kr

<Copyright © 게임뷰. Unauthorized reproduction and redistribution prohibited.>
